Why this matters

Every transaction in Midaz generates balance operations that need to be persisted. In the default (synchronous) mode, each message triggers an individual database insert. That’s fine for moderate volumes, but at scale — thousands of transactions per second — it becomes the bottleneck. The Bulk Recorder changes this by accumulating messages and writing them in batches. Fewer round trips to PostgreSQL, less lock contention, and significantly higher throughput. For high-volume operations like mass payouts, batch settlements, or real-time payment processing, this is the difference between a system that keeps up and one that doesn’t. For broader strategies on scaling Midaz, see Scalability strategies.

How it works

The Bulk Recorder sits between the RabbitMQ consumer and the database layer. Instead of inserting each message immediately, it collects them in a buffer and flushes under two conditions:

- Batch size reached — the buffer fills up to the configured size.

- Timeout elapsed — the configured flush timeout expires, even if the buffer isn’t full.

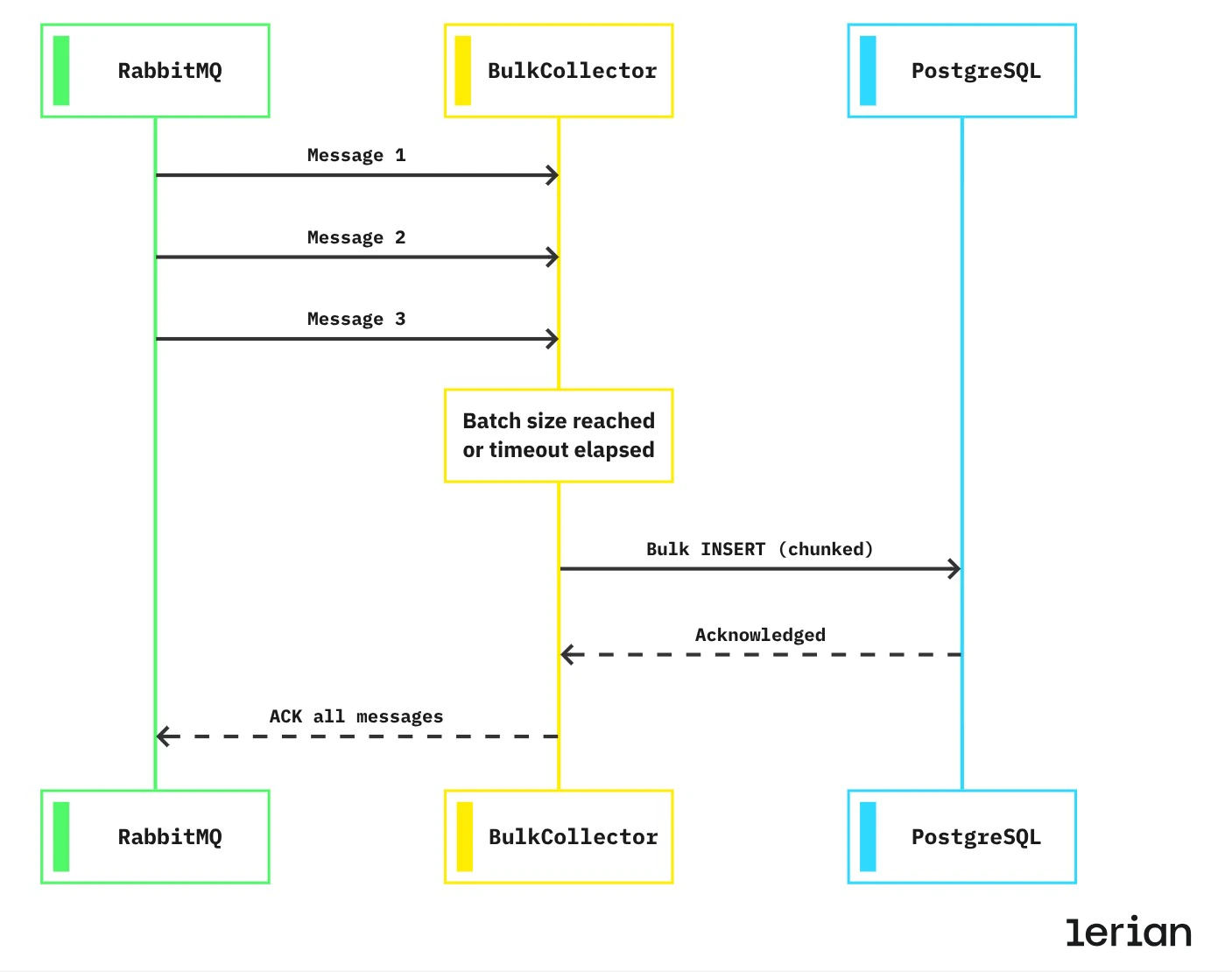

- RabbitMQ delivers messages to the BulkCollector one at a time — Message 1, Message 2, and so on up to Message N.

- The BulkCollector holds them in memory instead of writing each one immediately. It keeps collecting until the batch size is reached or the flush timeout expires.

- The BulkCollector sends a chunked bulk INSERT to PostgreSQL. Large batches are automatically split into chunks that respect PostgreSQL’s parameter limits. Each chunk uses `ON CONFLICT (id) DO NOTHING`, so retries and duplicate deliveries are handled safely.

- PostgreSQL acknowledges the write, confirming the data is persisted.

- The BulkCollector acknowledges all messages back to RabbitMQ in a single ACK, releasing them from the queue together.
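The flow above can be sketched as a small in-memory collector. This is a minimal illustration, not the Midaz implementation: the class layout, the `write_batch` and `ack_batch` callbacks, the injectable `clock`, and the choice to measure the timeout from the oldest buffered message are all assumptions made for the example.

```python
import time
from typing import Callable, List, Optional


class BulkCollector:
    """Illustrative buffer that flushes on batch size or timeout (hypothetical API)."""

    def __init__(
        self,
        batch_size: int,
        flush_timeout_ms: int,
        write_batch: Callable[[List[dict]], None],
        ack_batch: Callable[[List[dict]], None],
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.batch_size = batch_size
        self.flush_timeout_s = flush_timeout_ms / 1000.0
        self.write_batch = write_batch  # stands in for the bulk INSERT
        self.ack_batch = ack_batch      # stands in for the single RabbitMQ ACK
        self.clock = clock
        self.buffer: List[dict] = []
        self.first_arrival: Optional[float] = None  # arrival time of the oldest buffered message

    def on_message(self, message: dict) -> None:
        """Buffer one message and flush if the batch size is reached."""
        if not self.buffer:
            self.first_arrival = self.clock()
        self.buffer.append(message)
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def on_tick(self) -> None:
        """Call periodically; flushes a partial batch once the timeout has elapsed."""
        if self.buffer and self.clock() - self.first_arrival >= self.flush_timeout_s:
            self.flush()

    def flush(self) -> None:
        """Persist the buffered messages, then acknowledge them together."""
        if not self.buffer:
            return
        batch, self.buffer = self.buffer, []
        self.first_arrival = None
        self.write_batch(batch)  # if this raises, nothing below runs and nothing is acked
        self.ack_batch(batch)
```

The ordering inside `flush` is the point of the sketch: messages are acknowledged only after the write returns, so a failed write leaves them unacknowledged.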

Enabling Bulk Recorder

Bulk mode requires two conditions to be active simultaneously:

Configuration

| Variable | Description | Default |
|---|---|---|
| `BULK_RECORDER_ENABLED` | Enable or disable bulk mode. | true |
| `BULK_RECORDER_SIZE` | Number of messages to accumulate before flushing. 0 = auto-calculated. | 0 (auto) |
| `BULK_RECORDER_FLUSH_TIMEOUT_MS` | Maximum time (ms) to wait before flushing an incomplete batch. | 100 |
| `BULK_RECORDER_MAX_ROWS_PER_INSERT` | Maximum rows per INSERT statement sent to PostgreSQL. | 1000 |
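As a rough illustration of how these variables and their defaults fit together, the sketch below reads them from an environment mapping. The `BulkRecorderConfig` class and `load_bulk_recorder_config` function are hypothetical names for this example, and treating only the string `true` (case-insensitive) as enabled is an assumption; how Midaz itself parses these values is not covered here.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class BulkRecorderConfig:
    """Hypothetical container for the four documented settings."""
    enabled: bool
    size: int                  # 0 means "auto-calculated"
    flush_timeout_ms: int
    max_rows_per_insert: int


def load_bulk_recorder_config(env: Mapping[str, str] = os.environ) -> BulkRecorderConfig:
    """Read the documented variables, falling back to the documented defaults."""
    return BulkRecorderConfig(
        # Assumption: only "true" (any case) enables bulk mode.
        enabled=env.get("BULK_RECORDER_ENABLED", "true").strip().lower() == "true",
        size=int(env.get("BULK_RECORDER_SIZE", "0")),
        flush_timeout_ms=int(env.get("BULK_RECORDER_FLUSH_TIMEOUT_MS", "100")),
        max_rows_per_insert=int(env.get("BULK_RECORDER_MAX_ROWS_PER_INSERT", "1000")),
    )


if __name__ == "__main__":
    print(load_bulk_recorder_config())
```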

Auto-calculated batch size

When `BULK_RECORDER_SIZE` is set to 0 (the default), the batch size is derived automatically:

Tuning for your workload

The two main levers are batch size and flush timeout. The right balance depends on whether you prioritize latency or throughput.
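One way to reason about the trade-off is a simple model: under a steady arrival rate, a batch flushes either when it fills or when the timeout expires, whichever comes first. The function below is a back-of-the-envelope sketch under that assumption. The function name and the example numbers are hypothetical, and real traffic is rarely perfectly steady.

```python
def time_to_flush_ms(batch_size: int, flush_timeout_ms: float, messages_per_second: float) -> float:
    """Estimate how long a fresh batch waits before flushing (steady-rate assumption)."""
    if batch_size <= 0 or messages_per_second <= 0:
        raise ValueError("batch_size and messages_per_second must be positive")
    fill_time_ms = batch_size * 1000.0 / messages_per_second
    # The flush happens on whichever condition is met first.
    return min(fill_time_ms, float(flush_timeout_ms))


if __name__ == "__main__":
    # Hypothetical workloads: the same settings behave differently at different rates.
    for rate in (1_000, 10_000):
        print(rate, time_to_flush_ms(batch_size=500, flush_timeout_ms=100, messages_per_second=rate))
```

In this model, a batch size that the traffic cannot fill within the timeout means every flush is timeout-driven, so the timeout alone sets the added latency.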

Low latency (real-time processing)

Keep batches small and timeouts short. Messages are persisted quickly, even if batches aren’t full.

High throughput (batch operations)

Larger batches and longer timeouts maximize database efficiency. Ideal for mass payouts, end-of-day settlements, or migration workloads.

Safety guarantees

The Bulk Recorder is designed to be safe under all conditions:

Idempotency

Every bulk insert uses `ON CONFLICT (id) DO NOTHING`. If a message is delivered twice (due to a retry, redelivery, or network hiccup), the duplicate is silently discarded. No data corruption, no constraint violations.
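The effect of the conflict clause can be seen in a small, self-contained demonstration. The sketch uses Python's built-in `sqlite3` module as a stand-in for PostgreSQL (SQLite 3.24 or later accepts the same `ON CONFLICT (id) DO NOTHING` clause). The `operations` table and its columns are hypothetical and do not reflect the real Midaz schema.

```python
import sqlite3
from typing import Iterable, Tuple


def create_schema(conn: sqlite3.Connection) -> None:
    # Hypothetical table used only for this demonstration.
    conn.execute("CREATE TABLE operations (id TEXT PRIMARY KEY, amount INTEGER NOT NULL)")


def count_rows(conn: sqlite3.Connection) -> int:
    return conn.execute("SELECT COUNT(*) FROM operations").fetchone()[0]


def bulk_insert(conn: sqlite3.Connection, rows: Iterable[Tuple[str, int]]) -> int:
    """Insert rows, skipping ids that already exist. Returns how many rows were added."""
    before = count_rows(conn)
    conn.executemany(
        "INSERT INTO operations (id, amount) VALUES (?, ?) ON CONFLICT (id) DO NOTHING",
        list(rows),
    )
    conn.commit()
    return count_rows(conn) - before


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    create_schema(conn)
    batch = [("op-1", 100), ("op-2", 250)]
    print(bulk_insert(conn, batch))  # first delivery
    print(bulk_insert(conn, batch))  # redelivery of the same batch
```

Replaying the same batch adds no rows and raises no error, and the rows already stored keep their original values.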

Deadlock prevention

Before each bulk insert, record IDs are sorted. This ensures all concurrent writers acquire locks in the same order, eliminating the most common source of PostgreSQL deadlocks in high-concurrency scenarios.

Internal chunking

Large batches are automatically split into chunks that fit within PostgreSQL’s 65,535 parameter limit per query:

| Record type | Columns per row | Rows per chunk | Parameters per chunk |
|---|---|---|---|
| Transaction | 15 | 1,000 | 15,000 |
| Operation | 30 | 1,000 | 30,000 |

Set `BULK_RECORDER_MAX_ROWS_PER_INSERT` if you want to adjust the chunk size. The default of 1,000 rows is optimal for most deployments.
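Sorting by ID and chunking under the parameter limit can be sketched together. This is an illustration under stated assumptions rather than the Midaz code: the `chunk_sorted` function, the dict-shaped records, and the rule of capping the chunk at `65,535 // columns_per_row` rows are all choices made for the example.

```python
from typing import Iterator, List

POSTGRES_MAX_PARAMS = 65_535  # per-query parameter limit cited above


def chunk_sorted(
    records: List[dict],
    columns_per_row: int,
    max_rows_per_insert: int = 1000,
) -> Iterator[List[dict]]:
    """Yield id-sorted chunks whose parameter count stays within the limit."""
    if columns_per_row <= 0 or max_rows_per_insert <= 0:
        raise ValueError("columns_per_row and max_rows_per_insert must be positive")
    # Each row binds one parameter per column, so cap rows accordingly.
    rows_per_chunk = min(max_rows_per_insert, POSTGRES_MAX_PARAMS // columns_per_row)
    if rows_per_chunk == 0:
        raise ValueError("a single row exceeds the parameter limit")
    # A consistent order means concurrent writers touch rows in the same sequence.
    ordered = sorted(records, key=lambda r: r["id"])
    for start in range(0, len(ordered), rows_per_chunk):
        yield ordered[start:start + rows_per_chunk]


if __name__ == "__main__":
    # 2,500 hypothetical Operation records (30 columns each), delivered out of order.
    records = [{"id": f"{i:06d}"} for i in reversed(range(2500))]
    print([len(c) for c in chunk_sorted(records, columns_per_row=30)])
```

With 30 columns per row, the limit allows at most 2,184 rows per statement (30 × 2,184 = 65,520 parameters), so the default of 1,000 rows leaves ample headroom.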

When to use

Use Bulk Recorder when:

- You process high volumes of transactions (hundreds or thousands per second).

- Your workload includes batch operations like mass payouts, settlements, or data migrations.

- You’re already using async transaction processing (`RABBITMQ_TRANSACTION_ASYNC=true`).

- You want to reduce database load and connection pressure.

Stay with the default (synchronous) mode when:

- Your volume is low enough that individual inserts aren’t a bottleneck.

- You need strict per-message ordering guarantees that batch processing would break.

- You’re debugging transaction processing and want simpler, message-by-message flow.